EU AI Act Compliance Guide: What Every Business Must Know Before the August 2026 Deadline

Author: Dispa - The AI Buff

The countdown has begun. Businesses around the world now have fewer than five months to comply with the EU AI Act — the world’s first comprehensive, legally binding AI framework. The August 2026 deadline is fast approaching, and the stakes are higher than ever.

Non-compliance carries serious financial consequences. Companies that engage in prohibited AI practices face fines of up to €35 million or 7% of global annual turnover, whichever is greater (for SMEs and start-ups, whichever is lower). That ceiling exceeds GDPR's maximum of €20 million or 4% of turnover. Moreover, regulators are not waiting years to act.

Yet many businesses remain underprepared. Some organizations still don’t know which risk category their AI systems fall into. Others assume the Act doesn’t apply to them because they operate outside Europe. Furthermore, some teams have started compliance programs but lack clarity on the seven specific technical requirements they must meet.

“The EU AI Act is not just a European issue. Any company in the world that develops or deploys AI systems touching EU citizens must comply. The extraterritorial reach of this law is broader than most legal teams currently appreciate.”

— Dr. Kilian Gross, Head of AI Policy, European Commission (2025)

This guide is designed for business leaders, compliance officers, legal teams, CTOs, and product managers who need a clear, actionable roadmap. Whether your company builds AI products, deploys third-party AI tools, or simply uses AI in daily operations — this is everything you need to know.

By the end of this article, you will understand the risk classification system, the seven core compliance requirements, industry-specific obligations, the real cost of non-compliance, and a practical 90-day action plan. You will also find answers to the most common questions teams are asking right now.

Let’s start with the foundation.

What Is the EU AI Act? The World’s First Comprehensive AI Law

A Brief History and Why It Matters

The European Union formally adopted the EU Artificial Intelligence Act in May 2024. The legislative process began in April 2021, when the European Commission published its initial proposal. On August 1, 2024, the Act entered into force — making the EU the first jurisdiction in the world to establish a legally binding AI framework across sectors.

Importantly, this is not a voluntary code of conduct. It is hard law, backed by defined penalties and designated enforcement authorities. Think of the EU AI Act as the GDPR of artificial intelligence. Just as GDPR set a global baseline for data protection, the AI Act sets a global baseline for responsible AI development and deployment.

The regulation takes a risk-based approach. Consequently, your compliance burden depends directly on how much potential harm your AI system could cause. Most AI use cases — entertainment recommendations, predictive maintenance tools, and content optimization software — face minimal obligations. However, AI systems that make consequential decisions about people face strict requirements.

Who Does the EU AI Act Apply To?

The Act applies to providers (organizations that develop or place AI systems on the market), deployers (organizations that use AI professionally), importers, and distributors operating within or serving the EU. Critically, your company’s location does not exempt you from these obligations.

The extraterritorial scope is one of the most misunderstood features of the Act. If your company operates from the United States, United Kingdom, Singapore, or anywhere outside Europe, but your AI system affects individuals in EU member states, you must comply. This is the same jurisdictional logic that made GDPR a global compliance requirement.

However, there are limited exceptions. AI used exclusively for military, defence, or national security purposes, AI developed and put into service solely for scientific research and development, and use by individuals for purely personal, non-professional activities fall outside the Act's scope. For any commercial AI deployment touching the EU, though, compliance is mandatory.

The Complete EU AI Act Implementation Timeline

The EU AI Act rolls out in phases. Understanding this timeline is essential for planning your compliance program. Missing an earlier deadline can compound your exposure as later deadlines arrive.

| Deadline | What Takes Effect | Who Is Primarily Affected |
|---|---|---|
| August 1, 2024 | EU AI Act enters into force. Awareness and preparation phase begins. | All businesses with AI exposure in the EU |
| February 2, 2025 | Prohibited AI practices (Article 5) are banned; AI literacy obligations (Article 4) apply. | All providers and deployers globally |
| August 2, 2025 | GPAI model obligations, governance rules, and penalty provisions take effect. | GPAI model providers; national authorities |
| August 2, 2026 ⚠ | High-risk AI systems (Annex III) must be fully compliant. Transparency obligations (Article 50) also apply. | All providers and deployers of Annex III high-risk AI; providers and deployers of chatbots and generative AI |
| August 2, 2027 | High-risk AI embedded in regulated products (Annex I) must comply. | Medical devices, machinery, vehicles with AI components |

The August 2, 2026 deadline affects the broadest range of businesses. Annex III AI systems in hiring, education, credit decisions, access to essential services (including emergency patient triage), and critical infrastructure must all achieve full compliance by this date. Five months is tight, but achievable if you start immediately.

The Risk Classification System: Where Does Your AI System Fall?

Before investing in compliance activities, every business must answer one foundational question: What risk tier does my AI system belong to? Your answer determines your compliance obligations, your timeline, and your penalty exposure. Therefore, getting this classification right is the single most important first step.

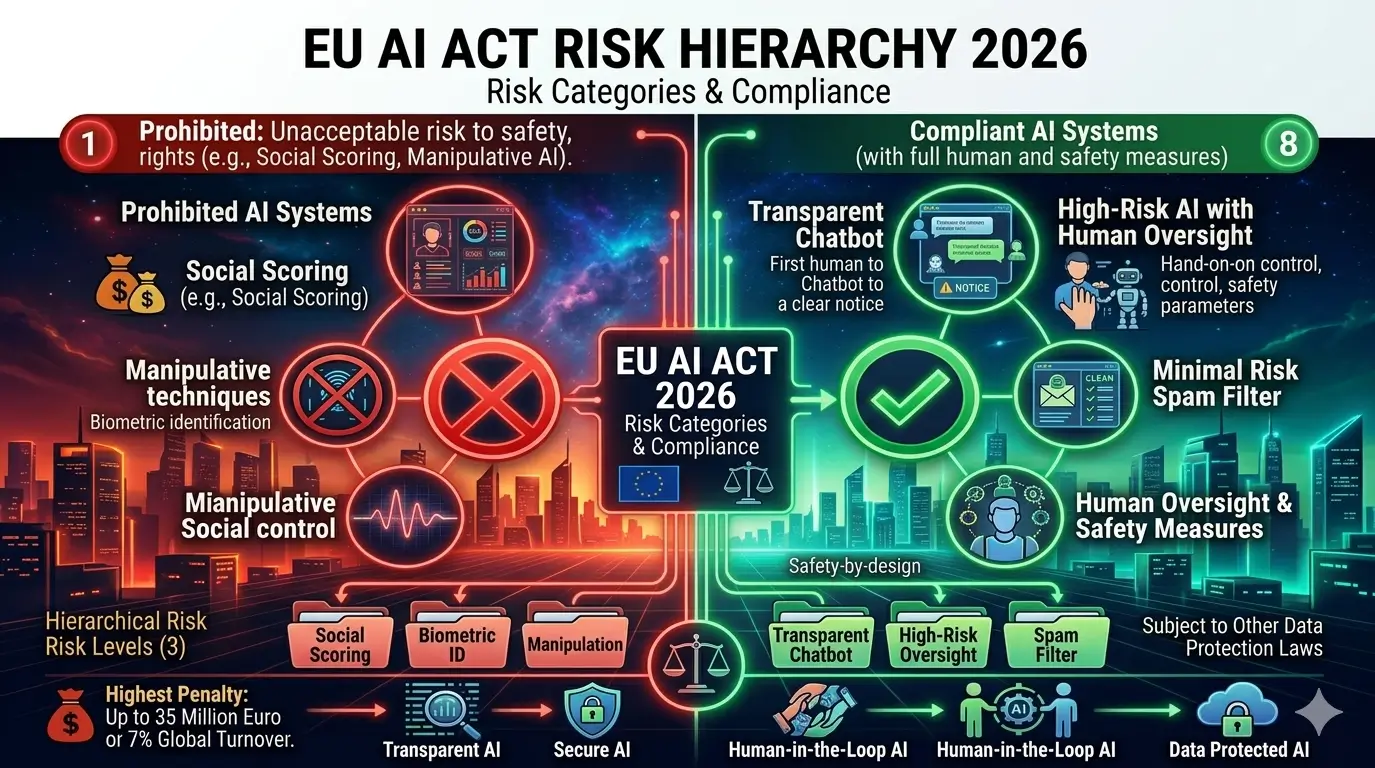

Tier 1: Unacceptable Risk — AI Practices That Are Now Banned

The highest tier covers AI applications the EU considers inherently unacceptable. These practices were banned as of February 2, 2025. If your organization uses any of the following, you must stop immediately.

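Specifically, prohibited practices include AI that manipulates people through subliminal or deceptive techniques, or that exploits vulnerabilities linked to age, disability, or social or economic situation, in ways that cause significant harm. Social scoring that leads to unjustified or detrimental treatment is banned outright, whether carried out by public authorities or private companies. Real-time remote biometric identification in publicly accessible spaces for law enforcement purposes is also prohibited, with only narrow exceptions subject to judicial or independent administrative authorisation.

Furthermore, AI that predicts whether a person will commit a crime based solely on profiling or personality traits is illegal. AI systems that build or expand facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage are also banned, as is emotion recognition in workplaces and educational institutions, except for medical or safety reasons. Violations carry the highest penalty: up to €35 million or 7% of global annual turnover.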

Tier 2: High-Risk AI — The Core of the August 2026 Deadline

High-risk AI systems pose significant risks to health, safety, or fundamental rights. However, their benefits — when properly governed — outweigh those risks. Consequently, they are not banned. Instead, they face strict regulation. This tier represents the central compliance challenge for most businesses before August 2026.

High-risk AI systems fall into two groups. First, Annex I covers AI embedded in products already regulated under EU safety law — such as medical devices, machinery, and automotive systems. Second, and more broadly, Annex III covers eight application sectors driving the August 2026 deadline:

- Biometric identification and categorization of natural persons

- Critical infrastructure management (electricity grids, water systems, traffic management)

- Education and vocational training (AI that determines access or evaluates students)

- Employment and workforce management (CV screening, performance monitoring, promotion decisions)

- Access to essential private and public services (credit scoring, insurance, social benefits)

- Law enforcement (risk assessment tools, polygraph-like technologies)

- Migration, asylum, and border management

- Administration of justice and democratic processes

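Importantly, not every AI system in these areas automatically qualifies as high-risk. Under Article 6(3), an Annex III system is not considered high-risk if it does not pose a significant risk of harm, for example because it only performs a narrow procedural task; any system that profiles individuals, however, always remains high-risk. A shift-scheduling tool in a hospital is therefore likely minimal risk. By contrast, an AI assisting in clinical diagnosis is almost certainly high-risk, usually through Annex I because it qualifies as a medical device.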
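The Article 6(3) filter can be expressed as a first-pass triage helper. An Annex III system escapes the high-risk label if any of the four derogation conditions applies (a narrow procedural task, improving a previously completed human activity, detecting decision patterns without replacing human assessment, or a preparatory task), unless it profiles natural persons. The sketch below assumes hypothetical field names; its output is a starting point for documented legal review, not a determination.

```python
from dataclasses import dataclass


# Hypothetical screening record for one AI system; field names are illustrative.
@dataclass
class AnnexIIIScreen:
    in_annex_iii_area: bool          # falls within one of the eight Annex III areas
    performs_profiling: bool         # profiles natural persons
    narrow_procedural_task: bool     # Article 6(3)(a)
    improves_prior_human_work: bool  # Article 6(3)(b)
    detects_patterns_only: bool      # Article 6(3)(c), with proper human review
    preparatory_task_only: bool      # Article 6(3)(d)


def triage(screen: AnnexIIIScreen) -> str:
    """First-pass triage only; any outcome must be documented and legally reviewed."""
    if not screen.in_annex_iii_area:
        return "not Annex III (still check Annex I, Article 5, and Article 50)"
    if screen.performs_profiling:
        # Profiling of natural persons keeps an Annex III system high-risk.
        return "high-risk"
    derogation = (
        screen.narrow_procedural_task
        or screen.improves_prior_human_work
        or screen.detects_patterns_only
        or screen.preparatory_task_only
    )
    if derogation:
        # The provider must document this assessment before placing the system on the market.
        return "possibly not high-risk: document the Article 6(3) assessment"
    return "high-risk"
```

For example, a CV-ranking tool that scores individual applicants profiles them, so it stays high-risk even if it also looks like a preparatory task.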

Tier 3: Limited Risk — Transparency Is the Key Obligation

Limited-risk AI systems face lighter requirements. The focus here is on transparency — ensuring users know when AI is involved in interactions or decisions affecting them.

Specifically, chatbots and virtual assistants must disclose their AI nature to users unless this is obvious from the context. AI that generates synthetic content, including deepfakes, must mark that content as AI-generated. Moreover, deployers of emotion recognition systems must inform individuals when their emotions are being assessed; because Annex III also lists emotion recognition as high-risk, this disclosure comes on top of the high-risk requirements. Many marketing, customer service, and content creation tools fall into this tier.

Tier 4: Minimal Risk — The Majority of AI Use Cases

Most AI systems in commercial use today fall here and face no mandatory obligations beyond the general AI literacy duty that applies to all providers and deployers. AI-powered spam filters, entertainment recommendations, inventory optimization tools, and predictive maintenance software all belong in this category. Additionally, most enterprise analytics AI features fall here as well.

Voluntary adherence to EU codes of conduct is encouraged but not legally required. Therefore, if your AI clearly falls into this tier, you can focus resources on any systems that do carry compliance obligations.

General Purpose AI (GPAI): The New Category for Foundation Models

The EU AI Act introduces a distinct category for General Purpose AI models — systems trained on broad data that handle a wide range of tasks. This includes large language models (LLMs) and multimodal foundation models. GPAI obligations have been in effect since August 2025.

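All GPAI providers must produce technical documentation (including, where known, the model's energy consumption), publish a summary of the content used for training, and put in place a policy to comply with EU copyright law. Additionally, providers of models with systemic risk face further obligations. A model is presumed to carry systemic risk when its cumulative training compute exceeds 10²⁵ floating-point operations (FLOPs), and the Commission can also designate models directly. These obligations include model evaluation with adversarial testing (red-teaming), assessment and mitigation of systemic risks, reporting serious incidents to the European AI Office without undue delay, and adequate cybersecurity protection.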
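Providers often estimate cumulative training compute with the widely used rule of thumb of roughly 6 FLOPs per parameter per training token for dense transformer models. The sketch below applies that heuristic to the 10²⁵ FLOP presumption; the 6ND approximation is an engineering estimate we assume here, not a method the Act prescribes, and the model sizes are hypothetical.

```python
# Presumption threshold for systemic risk: cumulative training compute above 1e25 FLOPs.
SYSTEMIC_RISK_FLOPS = 1e25


def estimate_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough dense-transformer estimate: about 6 FLOPs per parameter per token (assumption)."""
    return 6.0 * n_params * n_tokens


def presumed_systemic_risk(n_params: float, n_tokens: float) -> bool:
    """True if the estimated compute exceeds the presumption threshold."""
    return estimate_training_flops(n_params, n_tokens) > SYSTEMIC_RISK_FLOPS


# Hypothetical models: 70B parameters on 15T tokens (about 6.3e24 FLOPs, below the line)
# and 400B parameters on 15T tokens (about 3.6e25 FLOPs, above it).
mid_size = presumed_systemic_risk(70e9, 15e12)
frontier = presumed_systemic_risk(400e9, 15e12)
```

Because the estimate ignores techniques such as mixture-of-experts sparsity, a result close to the threshold calls for measured compute figures rather than this approximation.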

| Risk Tier | Common Examples | Primary Obligations | Max Penalty |
|---|---|---|---|
| Unacceptable | Social scoring, real-time remote biometric identification for law enforcement, subliminal manipulation | Complete prohibition; stop immediately | €35M / 7% global turnover |
| High Risk | CV screening AI, credit scoring, medical diagnostic AI, student assessment tools, emotion recognition | Full 7-requirement framework, conformity assessment, CE marking, EU database registration | €15M / 3% global turnover |
| Limited Risk | AI chatbots, deepfake generators, AI-generated content | Transparency and disclosure obligations | €15M / 3% global turnover |
| Minimal Risk | Spam filters, recommendation engines, process automation AI | Voluntary codes of conduct | No mandatory penalty |
| GPAI (Systemic Risk) | Large language models (GPT-class, Gemini-class), multimodal foundation models | Technical documentation, red-teaming, incident reporting, copyright compliance | €15M / 3% global turnover |

The 7 Core Compliance Requirements for High-Risk AI Systems

If your AI system qualifies as high-risk under Annex III, you must satisfy seven distinct compliance requirements before August 2, 2026. Each requirement demands genuine organizational investment — in documentation, process design, technical testing, and governance. There is no shortcut. Here is what each requirement means in practice.

Requirement 1: Risk Management System

Every high-risk AI system must operate under a documented, continuous risk management process. This process covers the entire lifecycle — from initial development through active deployment, ongoing monitoring, and eventual decommissioning. Importantly, this is not a one-time compliance event. You must update it whenever the AI system changes or new risks emerge.

In practice, your risk management system must identify and analyze known and reasonably foreseeable risks, estimate and evaluate them, adopt mitigation measures, and track residual risks, including those that surface in real-world use. Consequently, most organizations maintain a formal AI Risk Register with a named owner for each risk and a fixed review cadence, such as quarterly, for active systems.

Additionally, your risk assessment must specifically address vulnerable groups. If children, people with disabilities, or minority communities may disproportionately interact with your AI system, you need explicit risk assessments for those populations.

Requirement 2: Data Governance and Data Quality

Your training, validation, and testing data must meet rigorous quality standards. Specifically, your data governance practices must address the origin and provenance of all data sources, potential biases in training data, and whether the data suits the intended deployment context.

In concrete terms, you must document where your training data came from, how you collected it, and what preprocessing you applied. Furthermore, you must show how representative the data is of the real-world population your AI will serve.

Bias assessments are a requirement, not an optional best practice. You must test whether your model performs differently across gender, age, ethnicity, nationality, and other protected characteristics. Tools such as Weights & Biases, MLflow, or DVC can help by versioning datasets and recording experiments, which supports the documentation side of these requirements; the bias analysis itself still needs metrics chosen for your use case.
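A simple starting point for the subgroup comparison is to compute a metric such as accuracy separately for each group and report the largest gap. The sketch below does this in plain Python on toy data; the record format and the 0.05 tolerance are illustrative assumptions, and a real assessment would use several metrics (for example, false positive and false negative rates) suited to the use case.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """records: iterable of (group, y_true, y_pred) tuples (hypothetical format)."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {g: correct[g] / total[g] for g in total}


def max_gap(per_group):
    """Largest difference in the metric between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Toy data for two age bands; not real results.
records = [
    ("18-34", 1, 1), ("18-34", 0, 0), ("18-34", 1, 1), ("18-34", 0, 1),
    ("55+", 1, 0), ("55+", 0, 0), ("55+", 1, 0), ("55+", 0, 0),
]
per_group = accuracy_by_group(records)   # {'18-34': 0.75, '55+': 0.5}
gap = max_gap(per_group)                 # 0.25
needs_review = gap > 0.05                # illustrative tolerance, not a legal threshold
```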

Requirement 3: Technical Documentation

Before placing a high-risk AI system on the EU market, you must prepare comprehensive technical documentation in line with Annex IV of the Act. Think of this as your AI system’s complete regulatory dossier — the record an enforcement authority could request at any time.

Required elements include a full system description and intended purpose, design specifications and architecture, training methodology and datasets, validation and testing results across demographic groups, monitoring and logging procedures, cybersecurity measures, and deployer instructions for use.

Treat this as a living document, not a static report. Every significant model update — fine-tuning, dataset changes, architectural revisions — requires updating the documentation. Many compliance teams manage these as versioned records in platforms like Confluence or dedicated GRC tools, linked directly to deployment pipelines.
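One way to keep the dossier current is a release-pipeline check that fails when a required section is missing or empty. The sketch below assumes a hypothetical layout in which documentation is stored as a dictionary of named sections; the section keys paraphrase the elements listed above rather than reproducing Annex IV verbatim.

```python
# Hypothetical section keys paraphrasing the required documentation elements.
REQUIRED_SECTIONS = (
    "system_description_and_intended_purpose",
    "design_specifications_and_architecture",
    "training_methodology_and_datasets",
    "validation_and_testing_by_group",
    "monitoring_and_logging",
    "cybersecurity_measures",
    "instructions_for_use",
)


def missing_sections(dossier: dict[str, str]) -> list[str]:
    """Return required sections that are absent or contain only whitespace."""
    return [s for s in REQUIRED_SECTIONS if not dossier.get(s, "").strip()]


def check_release(dossier: dict[str, str], model_version: str) -> None:
    """Raise before deployment if the documentation for this version is incomplete."""
    gaps = missing_sections(dossier)
    if gaps:
        raise ValueError(f"Documentation for {model_version} incomplete: {', '.join(gaps)}")
```

A presence check like this only catches omissions; whether each section is accurate still requires human review.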

Requirement 4: Record-Keeping and Automatic Logging

High-risk AI systems must automatically log events and operational data throughout their lifetime. These logs must support post-hoc auditing — especially in the event of an incident, a regulatory investigation, or a legal dispute.

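At minimum, logs should capture the time of each operation, the input data or a secure identifier, the system's output, and the identity of human operators who reviewed or acted on results; for remote biometric identification systems, the Act lists similar fields explicitly. Providers and deployers must keep these logs for a period appropriate to the system's intended purpose, and for at least six months, unless other EU or national law requires otherwise. The separate 10-year retention period applies to technical documentation and the EU declaration of conformity, not to logs.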

Requirement 5: Transparency and Information for Deployers

As a provider of a high-risk AI system, you must supply deployers with clear instructions for use. These instructions must specifically address the system’s intended purpose and scope, known performance limitations and error rates, demographic subgroups where accuracy may vary, and circumstances in which users should not rely on the system.

Additionally, instructions must enable deployers to meet their own human oversight obligations and guide them in monitoring for unexpected behavior. This requirement creates a supply chain of accountability. As a deployer, you bear responsibility for using AI within its documented purpose.

Therefore, if a provider has not given you adequate instructions, formally request them and document that request. Regulators assess deployer compliance partly on whether you had sufficient information — and whether you acted on it.

Requirement 6: Human Oversight Measures

This requirement carries the most significant operational implications. High-risk AI systems must enable effective, meaningful human oversight. Humans must understand what the system does, monitor it in real time, intervene and override outputs, and consciously decide not to act on AI results in specific cases.

In practice, consequential decisions — hiring, credit approvals, medical diagnoses, educational assessments — cannot be fully automated when driven by high-risk AI. You must design a documented human review step with real decision-making authority. “Human in the loop” must be genuinely meaningful, not a rubber stamp.

This has direct product design implications. Systems that route high-stakes decisions through AI without a human review point need redesigning before August 2026. The investment is real. However, the regulatory cost of operating without meaningful human oversight is likely to be far higher.
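One way to make the review step structural rather than optional is to let the AI produce only a recommendation and require a named reviewer's decision, with a recorded rationale, before anything takes effect. The sketch below shows that pattern; the class and function names are hypothetical, and a production system would add authentication, audit logging, and a way to pause the system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    case_id: str
    ai_outcome: str          # e.g. "reject"
    ai_confidence: float


@dataclass
class Decision:
    case_id: str
    final_outcome: str
    reviewer_id: str
    overrode_ai: bool
    rationale: str


def finalize(rec: Recommendation, reviewer_id: Optional[str],
             reviewer_outcome: Optional[str], rationale: str = "") -> Decision:
    """Refuse to finalize without a human decision and a written rationale."""
    if not reviewer_id or reviewer_outcome is None:
        raise PermissionError(f"Case {rec.case_id}: human review required before finalizing")
    if not rationale.strip():
        # Requiring a rationale even when the reviewer agrees discourages rubber-stamping.
        raise ValueError("Record the reviewer's rationale, including when they agree with the AI")
    return Decision(rec.case_id, reviewer_outcome, reviewer_id,
                    reviewer_outcome != rec.ai_outcome, rationale)
```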

Requirement 7: Accuracy, Robustness, and Cybersecurity

High-risk AI systems must achieve appropriate accuracy for their intended purpose, demonstrate robustness against adversarial manipulation, and maintain strong cybersecurity throughout their lifecycle. You must document, test, and actively maintain all three properties.

Specifically, accuracy metrics must appear in technical documentation alongside honest acknowledgment of their limitations. Robustness testing means deliberately feeding the system manipulated inputs to verify it resists incorrect outputs. Furthermore, cybersecurity measures must address AI-specific attack vectors: data poisoning, model evasion, and model extraction.
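A basic robustness check applies small perturbations to inputs and measures how often the prediction changes. The sketch below uses a toy threshold model and uniform random noise purely for illustration; the model, noise scale, and trial count are assumptions, and random-noise testing does not replace targeted adversarial attacks or testing against data poisoning and model extraction.

```python
import random
from typing import Callable, Sequence


def flip_rate(predict: Callable[[Sequence[float]], int],
              inputs: list[list[float]], noise: float = 0.01,
              trials: int = 20, seed: int = 0) -> float:
    """Fraction of perturbed copies whose prediction differs from the clean prediction."""
    rng = random.Random(seed)
    flips = 0
    total = 0
    for x in inputs:
        clean = predict(x)
        for _ in range(trials):
            noisy = [v + rng.uniform(-noise, noise) for v in x]
            flips += int(predict(noisy) != clean)
            total += 1
    return flips / total


def toy_model(x: Sequence[float]) -> int:
    # Hypothetical scoring rule standing in for a real model.
    return int(sum(x) > 1.0)
```

Inputs far from the decision boundary should never flip; a high flip rate near the boundary signals where accuracy claims need caveats in the documentation.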

“The combination of accuracy benchmarks, adversarial robustness testing, and cybersecurity requirements means that EU AI Act compliance is not just a legal exercise — it is a rigorous engineering quality standard.”

— European AI Office Technical Guidance, 2025

Industry-Specific Compliance: What Your Sector Must Do Before August 2026

The EU AI Act applies consistently across sectors. However, the practical compliance path looks very different depending on your industry. Regulatory overlaps, existing frameworks, and sector-specific risk profiles all shape what “compliant” means in practice. Here is what each major sector needs to prioritize.

Healthcare and MedTech: The Dual Compliance Challenge

AI systems in clinical contexts — diagnostic imaging algorithms, clinical decision support tools, drug interaction checkers, and patient risk stratification systems — almost universally qualify as high-risk. Moreover, many also fall under the EU Medical Device Regulation (MDR) or In Vitro Diagnostic Regulation (IVDR), creating a dual compliance obligation.

Fortunately, the EU AI Act deliberately aligns with these frameworks. For AI that is itself a medical device, the AI Act requirements are checked within the existing MDR or IVDR conformity assessment rather than in a separate procedure, and these Annex I obligations apply from August 2, 2027. However, meaningful gaps remain. Specifically, the AI Act adds data governance documentation requirements and expanded human oversight provisions not covered by MDR or IVDR.

As a priority action, map every AI clinical tool against both frameworks. Then identify the gap between MDR/IVDR compliance and AI Act requirements. Finally, close those gaps — particularly on training data bias documentation and human override protocol design.

HR Technology and Recruitment: The Highest-Scrutiny Sector

AI used in employment decisions is explicitly listed as high-risk in Annex III. This covers CV screening, interview analysis, performance monitoring, promotion recommendations, and termination risk scoring. Additionally, enforcement authorities in Germany, France, and the Netherlands have indicated HR AI will be among the first sectors targeted post-August 2026.

Case Study: Early Compliance as a Competitive Advantage

A European HR-Tech SaaS Company (Illustrative Scenario)

A 150-person HR technology company serving enterprise clients across Germany, France, and the Netherlands recognized in mid-2025 that their AI-powered performance review tool qualified as high-risk under Annex III. Rather than waiting, they launched a structured compliance initiative in Q3 2025.

The program took eight months and cost approximately €180,000 in legal, technical, and consultancy resources. As a result, they completed their internal conformity assessment and issued an EU declaration of conformity by March 2026. Within weeks of receiving that documentation, two major enterprise clients that had paused contract renewals signed 3-year agreements.

Key takeaway: For B2B AI companies, early compliance generates revenue; it is not just a cost center. Enterprise procurement teams now require EU AI Act compliance documentation as a vendor selection condition.

If you provide HR AI tools, your enterprise clients will increasingly require compliance evidence, such as a declaration of conformity, as a contract prerequisite. Therefore, building your program now protects existing revenue while creating a competitive differentiator in sales cycles.

Fintech and Banking: Navigating Overlapping Regulatory Frameworks

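Credit scoring and creditworthiness assessment AI for individuals, including the loan processing tools built on it, qualifies as high-risk, as does risk assessment and pricing AI for life and health insurance. By contrast, Annex III expressly excludes AI used to detect financial fraud, and anti-money laundering systems are not listed, so these need a case-by-case check rather than an automatic high-risk label. Furthermore, the compliance picture for fintech is complex because of regulatory overlap with DORA (Digital Operational Resilience Act), the Capital Requirements Regulation, and EBA model risk guidelines.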

Financial institutions with mature model governance frameworks have a significant head start. The frameworks share conceptual overlap: both EBA guidelines and the EU AI Act emphasize documentation, validation, bias testing, and independent review. However, the requirements are not identical. A structured gap analysis is essential before assuming your existing framework satisfies AI Act obligations.

EdTech and Educational Institutions

AI systems that determine access to educational programs, assess or grade students, monitor student behavior, or make progression decisions are all high-risk. Consequently, EdTech companies and universities serving EU institutions must act now.

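Specifically, the most critical requirement in education is meaningful transparency. Students must understand when AI influences their assessment outcomes. Furthermore, students affected by decisions based on high-risk AI output have a right to a clear and meaningful explanation of the AI's role (Article 86), and they should have a genuine route to human review of any AI-generated decision affecting their educational path.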

Therefore, any student-facing AI that generates grades or progression recommendations without a documented human review step represents a clear compliance gap you must close before August 2026.

SaaS Providers and B2B AI Tools: Understanding the Provider vs. Deployer Divide

The EU AI Act draws a clear legal line between providers and deployers. As a SaaS provider building AI into your platform, you carry provider obligations: technical documentation, conformity assessment, and instructions for use to your customers. Your customers — the deployers — carry their own obligations: using the AI within its documented purpose and maintaining human oversight.

However, there is an important nuance. If a deployer customer uses your AI tool for a high-risk purpose you did not design for, high-risk obligations can still apply. For example, if an employer uses your general-purpose document analysis AI to screen job applicants' CVs, Article 25 treats that deployer as the provider of a high-risk system, with the full provider obligations that entails. You, as the original provider, must then cooperate and share the necessary information, unless you clearly specified that your system must not be turned into a high-risk system.

As a result, contractual clarity about permitted and prohibited use cases is essential. Review and update your vendor agreements to define the provider-deployer responsibility split explicitly before August 2026.

Penalties and Enforcement: The Real Cost of Non-Compliance

Building an internal business case for compliance investment requires quantifying the risk of inaction. Consequently, this section covers not only the financial penalty structure but also the broader consequences that penalty tables alone do not capture.

The Three-Tier Penalty Structure

| Violation Category | Maximum Fine | Illustrative Scenarios |
|---|---|---|
| Prohibited AI practices | €35 million OR 7% of global annual turnover | Deploying real-time biometric surveillance; using social scoring AI; running manipulative AI targeting psychological vulnerabilities |
| High-risk and transparency non-compliance | €15 million OR 3% of global annual turnover | Missing technical documentation; no conformity assessment; absent human oversight; non-registration in EU AI database; undisclosed AI chatbots |
| Incorrect or misleading information | €7.5 million OR 1% of global annual turnover | Supplying incorrect, incomplete, or misleading information to notified bodies or national competent authorities |

For most companies the cap is whichever amount is greater, but for SMEs and start-ups Article 99(6) applies whichever is lower. Either way, the turnover percentage makes penalties scale with commercial size. For instance, a start-up with €8 million in annual revenue faces a maximum of €560,000 (7%) for a prohibited-practice violation. By contrast, an enterprise with €2 billion in global revenue faces up to €140 million for the same violation, well above the €35 million fixed amount. Therefore, large organizations with significant EU exposure face the most acute urgency.
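The penalty arithmetic follows Article 99: for most undertakings the cap is the higher of the fixed amount and the turnover percentage, while for SMEs and start-ups it is the lower of the two. The sketch below reproduces both worked examples; it computes only the statutory maximum, since actual fines depend on factors such as the gravity and duration of the infringement.

```python
# Statutory maximums under Article 99(3)-(5): (fixed amount in EUR, share of worldwide turnover).
CAPS = {
    "prohibited_practice": (35_000_000, 0.07),
    "other_obligation": (15_000_000, 0.03),
    "misleading_information": (7_500_000, 0.01),
}


def max_fine(violation: str, worldwide_turnover_eur: float, is_sme: bool) -> float:
    """Upper bound of the fine: SMEs get the lower of the two amounts, others the higher."""
    fixed, share = CAPS[violation]
    pct_amount = share * worldwide_turnover_eur
    return min(fixed, pct_amount) if is_sme else max(fixed, pct_amount)


startup_cap = max_fine("prohibited_practice", 8_000_000, is_sme=True)
enterprise_cap = max_fine("prohibited_practice", 2_000_000_000, is_sme=False)
```

Set `is_sme=True` only for undertakings that meet the EU definition of an SME.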

How Enforcement Works: National and EU-Level Authorities

Enforcement operates at two levels. The European AI Office, within the European Commission, oversees GPAI model compliance and coordinates cross-border enforcement. Additionally, each EU member state must designate one or more National Competent Authorities (NCAs) for market surveillance within their territory.

Several NCAs are already taking shape. Germany has moved to designate the Federal Network Agency (Bundesnetzagentur) as its primary AI authority, and France's CNIL has expanded its work to cover AI. Spain established AESIA in 2023, widely described as the EU's first dedicated AI supervisory agency. As a result, enforcement capacity across the EU is growing ahead of the August 2026 deadline.

Enforcement in the initial post-deadline period is expected to focus on the highest-impact sectors first: HR AI, credit scoring systems, and healthcare AI. Because these sectors affect the largest number of people in the EU, regulators are likely to prioritize them.

Beyond Fines: The Hidden Costs That Matter Most

Financial penalties are only part of the non-compliance risk. Several additional consequences can prove more operationally damaging than the fines themselves.

Market withdrawal orders represent the most severe operational outcome. Regulators can require a non-compliant AI system to leave the EU market entirely, stopping EU-derived revenue with immediate effect. For software businesses with 20–40% EU revenue, this outcome could be existential.

Furthermore, commercial procurement barriers are already materializing before August 2026. Enterprise procurement teams in banking, insurance, healthcare, and government now include EU AI Act compliance in RFP processes and vendor due diligence checklists. Being identified as non-compliant creates commercial headwinds that far outlast any regulatory action.

Moreover, civil liability adds a third dimension. The European Commission withdrew its proposed AI Liability Directive in 2025, but the revised Product Liability Directive (EU) 2024/2853 explicitly covers software, including AI systems, giving people harmed by defective AI a clearer route to compensation alongside national tort law. This creates litigation exposure entirely separate from regulatory fines, and potentially far more costly for systems that caused widespread harm.

Your 90-Day EU AI Act Compliance Action Plan

If your organization has not yet launched a structured EU AI Act compliance program, start today. An imperfect program launched now is categorically more valuable — legally and commercially — than a perfect one that begins after the deadline. Here is a practical three-phase sprint to get your business into a defensible compliance position.

Phase 1 (Days 1–30): AI Inventory and Risk Classification

You cannot comply with obligations you have not mapped. Therefore, your first 30 days must focus entirely on building a complete, accurate picture of your AI landscape. This inventory is the foundation of everything else — rushing it creates compounding problems downstream.

First, assemble a cross-functional AI Inventory Team. Include Legal, Engineering, Product, HR, Finance, and Business Operations. Then systematically catalogue every AI system your organization develops, deploys, licenses from a third party, or uses operationally.

For each system, answer these key questions: What decisions does it influence? Who does it affect? Does it fall into any Annex III category? Does it affect people in the EU? Where classification is genuinely uncertain, default to the higher tier. Regulators treat good-faith over-classification far more sympathetically than deliberate under-classification.

✓ Phase 1 Compliance Checklist (Days 1–30)

- Complete AI systems inventory across all departments and business units

- Document the purpose, data inputs, decision outputs, and affected users of each system

- Classify every system: Unacceptable / High-Risk / Limited / Minimal / GPAI

- Identify EU market exposure: which systems affect people in the EU or serve EU-based deployers

- Flag any prohibited AI practices for immediate remediation action

- Prioritize high-risk systems for the compliance program based on impact and timeline

- Appoint a named AI Compliance Lead or establish an AI Governance Committee

- Brief senior leadership on scope and resource requirements
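The Phase 1 inventory can live in a spreadsheet, but keeping it as structured data makes the "default to the higher tier" rule easy to apply consistently. The sketch below is a hypothetical schema: each system records every tier reviewers consider plausible, and the helper assigns the strictest one. GPAI use is tracked as a separate flag because GPAI obligations attach to the model and can apply alongside a system's risk tier.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    # Higher value means stricter obligations.
    MINIMAL = 0
    LIMITED = 1
    HIGH = 2
    PROHIBITED = 3


@dataclass
class InventoryEntry:
    name: str
    purpose: str
    affects_people_in_eu: bool
    candidate_tiers: list[Tier]     # every tier reviewers consider plausible (non-empty)
    uses_gpai_model: bool = False   # tracked separately from the risk tier


def assigned_tier(entry: InventoryEntry) -> Tier:
    """When classification is uncertain, default to the strictest plausible tier."""
    return max(entry.candidate_tiers)


def triage_queue(entries: list[InventoryEntry]) -> list[tuple[str, Tier]]:
    """EU-exposed systems ordered for remediation, strictest tier first."""
    in_scope = [e for e in entries if e.affects_people_in_eu]
    ranked = sorted(in_scope, key=assigned_tier, reverse=True)
    return [(e.name, assigned_tier(e)) for e in ranked]
```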

Phase 2 (Days 31–60): Documentation, Governance, and Gap Analysis

With your inventory and classification complete, Phase 2 focuses on building compliance infrastructure. This includes technical documentation, governance structures, data quality assessments, and a systematic gap analysis against each of the seven high-risk requirements. Expect this phase to demand significant time from Engineering, Legal, and Product leadership simultaneously.

For each high-risk AI system, begin drafting the Annex IV technical documentation. In parallel, conduct a structured gap analysis. For each of the seven requirements, honestly assess your current state and the gap to full compliance. Document this formally — it becomes both your roadmap and evidence of good-faith effort if regulators audit you.

Additionally, commission a data governance review for each high-risk system’s training data. Review provenance, document quality issues, and initiate bias assessment across protected demographic characteristics. If significant bias issues emerge, address them now — before conformity assessment. Attempting to pass a conformity assessment with known unaddressed bias is both a regulatory risk and an ethical failure.

Finally, engage external EU AI Act legal counsel to review your gap analysis and advise on your conformity assessment pathway. Determine whether your systems qualify for self-assessment or require a notified body.

✓ Phase 2 Compliance Checklist (Days 31–60)

- Draft Annex IV technical documentation for each high-risk system

- Complete formal gap analysis against all 7 compliance requirements

- Initiate training data provenance review and bias assessment

- Establish data lineage documentation for all high-risk AI training datasets

- Draft AI Risk Management System documentation

- Design and document human oversight protocols for each high-risk workflow

- Engage qualified external EU AI Act legal / compliance advisor

- Determine conformity assessment pathway: self-assessment vs. notified body

- Review and update AI-related vendor contracts for deployer/provider clarity

- Begin ISO/IEC 42001 AI Management System alignment if pursuing certification

Phase 3 (Days 61–90): Testing, Conformity Assessment, and Registration

The final phase moves from documentation to validation. Here you test systems against the Act’s technical requirements, complete conformity assessment, and finish regulatory registration. This phase also includes the internal training that makes compliance sustainable after the deadline.

Start by executing comprehensive technical testing. Specifically, run accuracy benchmarking across demographic subgroups, robustness testing against adversarial inputs, and cybersecurity vulnerability assessment. Document all results — these form part of your Annex IV dossier. Remediate any failures before proceeding to conformity assessment.
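Subgroup benchmarking and a basic robustness check can be prototyped in plain Python before you adopt a testing framework. In this sketch, `predict` and `perturb` are hypothetical callables you supply. Random perturbation is only a simple stand-in for adversarial testing, which deliberately searches for worst-case inputs.

```python
import random
from collections import defaultdict


def subgroup_accuracy(y_true, y_pred, groups):
    """Accuracy computed separately for each demographic subgroup."""
    if not (len(y_true) == len(y_pred) == len(groups)):
        raise ValueError("y_true, y_pred and groups must be the same length")
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


def stability_rate(predict, inputs, perturb, trials=20, seed=0):
    """Share of inputs whose prediction never changes across `trials` random perturbations.

    This is a noise-based stand-in; real adversarial testing searches for worst-case inputs.
    """
    rng = random.Random(seed)  # fixed seed so results are reproducible for the dossier
    stable = 0
    for x in inputs:
        baseline = predict(x)
        if all(predict(perturb(x, rng)) == baseline for _ in range(trials)):
            stable += 1
    return stable / len(inputs)
```

For example, `subgroup_accuracy([1, 0, 1, 1], [1, 0, 0, 1], ["a", "a", "b", "b"])` reports accuracy of 1.0 for group "a" and 0.5 for group "b". A gap that size is exactly what should be documented and remediated before conformity assessment.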

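Next, complete your conformity assessment. For most Annex III systems, self-assessment is permitted: you assess compliance internally under the internal-control procedure in Annex VI and sign an EU declaration of conformity. The main exception within Annex III is biometric systems, which require a notified body unless harmonised standards have been applied in full. AI embedded in products regulated under Annex I follows the conformity assessment procedure of the relevant product legislation, which may require third-party assessment by a notified body. Apply CE marking where applicable and register your system in the EU AI database.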
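Writing the pathway choice down as a small decision function makes the reasoning easy for counsel to review. This sketch reflects our reading of Article 43: internal control under Annex VI for most Annex III systems, a notified body under Annex VII for biometrics unless harmonised standards are applied in full, and the sectoral procedure for Annex I products. The function and enum names are hypothetical, and edge cases should be confirmed with counsel.

```python
from enum import Enum
from typing import Optional


class Pathway(Enum):
    INTERNAL_CONTROL = "internal control (Annex VI), no notified body"
    NOTIFIED_BODY = "notified body assessment (Annex VII)"
    SECTORAL = "procedure under the relevant Annex I product legislation"


def conformity_pathway(annex_i_product: bool,
                       annex_iii_point: Optional[int],
                       harmonised_standards_in_full: bool) -> Pathway:
    """First-pass screen of the Article 43 routes; confirm the outcome with counsel."""
    if annex_i_product:
        # Safety components of regulated products follow their sectoral procedure,
        # which may itself require a notified body.
        return Pathway.SECTORAL
    if annex_iii_point is None or not 1 <= annex_iii_point <= 8:
        raise ValueError("system is not classified as high-risk under Annex I or Annex III")
    if annex_iii_point == 1 and not harmonised_standards_in_full:
        # Biometrics: a notified body is needed unless harmonised standards
        # (or common specifications) were applied in full.
        return Pathway.NOTIFIED_BODY
    return Pathway.INTERNAL_CONTROL
```

The function returns only the minimum route. A biometrics provider that has applied harmonised standards in full may still choose to involve a notified body.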

Finally, train your operational teams. The compliance program only succeeds if the people who operate and monitor each system understand their obligations: how to exercise meaningful oversight, what constitutes a reportable incident, and how to document unusual behavior. Compliance is an ongoing process, not a one-time event.
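Teams also need to know how quickly a reportable incident must be sent. The sketch below encodes the outer limits as we read Article 73. Those limits are 15 days after becoming aware of a serious incident, 10 days where a death is involved, and 2 days for a widespread infringement or a serious disruption of critical infrastructure. The category labels are hypothetical. The Act also expects a report immediately once a causal link is established or suspected, so treat these dates as the latest acceptable date, not the target.

```python
from datetime import date, timedelta

# Outer reporting limits as we read Article 73, counted from when the provider becomes aware.
# The Act also says to report immediately once a causal link is established or suspected.
REPORTING_LIMIT_DAYS = {
    "serious_incident": 15,
    "death": 10,
    "widespread_or_critical_infrastructure": 2,
}


def report_due_by(aware_on: date, kind: str) -> date:
    """Latest date to notify the market surveillance authority for an incident of `kind`."""
    try:
        limit = REPORTING_LIMIT_DAYS[kind]
    except KeyError:
        raise ValueError(f"unknown incident kind: {kind!r}") from None
    return aware_on + timedelta(days=limit)
```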

✓ Phase 3 Compliance Checklist (Days 61–90)

- Complete accuracy, robustness, and cybersecurity technical testing

- Document all test results and any remediation actions taken

- Complete conformity assessment (self-assessment or notified body)

- Sign EU Declaration of Conformity for each compliant high-risk system

- Apply CE marking to products where applicable

- Register high-risk AI systems in the EU AI database (where required)

- Deploy logging and automated monitoring infrastructure

- Train operational and deployment teams on human oversight requirements

- Establish ongoing compliance review schedule (quarterly recommended)

- Communicate compliance status formally to key customers and partners

- Set up a serious-incident reporting process to the relevant national market surveillance authority
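For the logging item, one common pattern is an append-only stream of JSON lines, with one timestamped event per decision or oversight action. This is a minimal sketch using only the standard library, and the event names and detail fields are hypothetical. The Act requires providers and deployers to keep these logs for at least six months unless other applicable law sets a different period. In production, write to durable storage rather than the in-memory buffer used here.

```python
import io
import json
from datetime import datetime, timezone


def log_event(stream, system_id, event, **details):
    """Append one structured, timestamped event as a JSON line and return it."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),  # UTC avoids ambiguity across sites
        "system_id": system_id,
        "event": event,
        **details,
    }
    stream.write(json.dumps(record, sort_keys=True) + "\n")
    return record


if __name__ == "__main__":
    buf = io.StringIO()  # stand-in for an append-only log file or log pipeline
    log_event(buf, "cv-screener-v2", "prediction", input_ref="app-1042", score=0.81)
    log_event(buf, "cv-screener-v2", "human_override", input_ref="app-1042", reviewer="r-17")
    print(buf.getvalue(), end="")
```

Logging human overrides alongside predictions produces the evidence trail your oversight training asks staff to create.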

Frequently Asked Questions About EU AI Act Compliance

These are the questions compliance teams, business leaders, and legal departments most commonly ask. Each answer is written to be directly actionable.

Does the EU AI Act apply to companies outside the European Union?

Yes. The EU AI Act has broad extraterritorial scope. Wherever a company is established, it must comply if it places AI systems on the EU market or puts them into service in the EU, or if the output its AI systems produce is used in the EU. This applies to businesses based in the United States, United Kingdom, Canada, Japan, and every other non-EU country.

In practice, if you have EU customers, whether businesses or consumers, who interact with or are affected by your AI systems, you are very likely in scope. The jurisdictional principle resembles GDPR's: access to the European market requires compliance with European law.
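The scope question can be turned into a deliberately rough screen over a few yes/no facts. This sketch is modelled on Article 2(1)(a) to (c) and ignores the Act's exclusions, such as military use and research conducted solely for scientific purposes. `ScopeFacts` and its fields are hypothetical. A True result means "talk to counsel", not a legal conclusion.

```python
from dataclasses import dataclass


@dataclass
class ScopeFacts:
    """Hypothetical questionnaire answers for one AI system."""
    role: str                                # "provider" or "deployer"
    placed_on_eu_market_or_in_service: bool
    established_in_eu: bool
    output_used_in_eu: bool


def likely_in_scope(f: ScopeFacts) -> bool:
    """Rough screen modelled on Article 2(1)(a) to (c); ignores the Act's exclusions."""
    if f.role not in ("provider", "deployer"):
        raise ValueError("role must be 'provider' or 'deployer'")
    if f.role == "provider" and f.placed_on_eu_market_or_in_service:
        return True   # (a): applies wherever the provider is established
    if f.role == "deployer" and f.established_in_eu:
        return True   # (b): deployers established or located in the EU
    # (c): non-EU providers and deployers whose system's output is used in the EU
    return f.output_used_in_eu
```

A US vendor with no EU sales whose scoring output is used by an EU client still screens as in scope under the last rule.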

What is the penalty for not complying with the EU AI Act?

Penalties follow a three-tier structure based on violation severity. First, engaging in prohibited AI practices can result in fines of up to €35 million or 7% of total worldwide annual turnover, whichever is higher. Second, non-compliance with most other obligations, including the high-risk AI requirements (such as missing documentation or absent human oversight), carries fines of up to €15 million or 3% of worldwide annual turnover, whichever is higher.

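Third, supplying incorrect, incomplete, or misleading information to notified bodies or national competent authorities is subject to fines of up to €7.5 million or 1% of worldwide annual turnover, whichever is higher. For SMEs, including start-ups, each cap is whichever of the two amounts is lower. Beyond financial penalties, market surveillance authorities can also require non-compliant AI systems to be withdrawn or recalled from the EU market.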
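The "whichever is higher" rule is simple arithmetic, and a short function makes it concrete. The caps below follow Article 99(3) to (5), and the SME rule follows Article 99(6). The tier labels are hypothetical shorthand. The result is only the statutory ceiling, because actual fines are set case by case.

```python
# Fixed cap in EUR and share of total worldwide annual turnover (percent), per Article 99(3)-(5).
TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def fine_ceiling_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Statutory maximum for a tier; actual fines are set case by case below this ceiling."""
    fixed_cap, percent = TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    # Article 99(6): SMEs and start-ups face whichever amount is lower; others whichever is higher.
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)
```

For a company with €2 billion in worldwide turnover, 7% is €140 million. That exceeds the €35 million fixed cap, so €140 million is the ceiling for a prohibited-practice violation.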

What exactly does the August 2026 deadline require businesses to do?

By August 2, 2026, providers and deployers of high-risk AI systems listed in Annex III must be fully compliant. (High-risk AI covered by the Annex I product legislation has until August 2, 2027.) For providers, this means documenting a working risk management system, finalizing all Annex IV technical documentation, and completing conformity assessment with a signed EU declaration of conformity.

Providers must also register the system in the EU AI database where required, apply CE marking where applicable, and build in logging and monitoring capabilities. Deployers must have human oversight protocols in place, with trained staff to carry them out. A system placed on the EU market after August 2, 2026 without meeting these requirements is non-compliant from day one.
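Working backwards from these dates is simple date arithmetic. The sketch below records the Article 113 application dates relevant to this guide and computes the latest start date for a 90-day plan. The dictionary keys and function names are hypothetical.

```python
from datetime import date, timedelta

# Application dates under Article 113 for the obligations discussed in this guide.
MILESTONES = {
    "prohibited_practices": date(2025, 2, 2),
    "annex_iii_high_risk": date(2026, 8, 2),
    "annex_i_high_risk": date(2027, 8, 2),
}


def days_left(today: date, deadline: date) -> int:
    """Calendar days until `deadline`; negative once it has passed."""
    return (deadline - today).days


def latest_plan_start(deadline: date, plan_days: int = 90) -> date:
    """Latest start date that still leaves `plan_days` calendar days before `deadline`."""
    return deadline - timedelta(days=plan_days)
```

With the defaults, `latest_plan_start(MILESTONES["annex_iii_high_risk"])` returns May 4, 2026. That is the last day on which a full 90-day plan still fits before the Annex III deadline.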

What is the difference between the EU AI Act and GDPR?

GDPR and the EU AI Act are distinct but complementary regulations. GDPR governs the collection, processing, storage, and protection of personal data. The EU AI Act governs the development, deployment, and operation of AI systems. Both frequently apply to the same product simultaneously.

The EU AI Act does not replace GDPR. Rather, it adds AI-specific obligations on top of the existing data protection framework. Consequently, companies should treat both as overlapping compliance domains requiring separate but coordinated programs.

Do startups and small businesses need to comply with the EU AI Act?

Yes, but the Act includes specific support measures for smaller businesses. SMEs, including micro-enterprises (fewer than 10 employees and no more than €2 million in annual turnover) and small enterprises (fewer than 50 employees and no more than €10 million in annual turnover), may provide technical documentation in a simplified form, and conformity assessment fees must be reduced in proportion to their size. Additionally, EU member states must establish AI regulatory sandboxes, and SMEs get priority access to them for testing compliance approaches.

However, the substantive requirements — risk management, technical documentation, human oversight, and conformity assessment — apply in full regardless of company size. Being small provides procedural accommodations for meeting the obligations. It does not exempt you from them.
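Size categories can be checked mechanically. This sketch simplifies the EU's general SME definition in Commission Recommendation 2003/361/EC, which is the source of the thresholds above. It ignores the Recommendation's rules on linked and partner enterprises, and the function name is hypothetical. The medium-enterprise row (fewer than 250 staff, with turnover up to €50 million or a balance sheet up to €43 million) comes from the same Recommendation.

```python
def enterprise_size(headcount: int, turnover_eur: int, balance_sheet_eur: int) -> str:
    """Simplified size test after Commission Recommendation 2003/361/EC.

    An enterprise qualifies for a category if it is under the headcount limit and meets
    either the turnover or the balance-sheet ceiling. Linked and partner enterprises,
    which the Recommendation also counts, are ignored here.
    """
    categories = (
        ("micro", 10, 2_000_000, 2_000_000),
        ("small", 50, 10_000_000, 10_000_000),
        ("medium", 250, 50_000_000, 43_000_000),
    )
    for name, max_staff, max_turnover, max_balance in categories:
        within_financials = turnover_eur <= max_turnover or balance_sheet_eur <= max_balance
        if headcount < max_staff and within_financials:
            return name
    return "large"
```

A result other than "large" points to the SME measures and the lower-of-two fine caps. It does not reduce the substantive requirements.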

Is my company required to register AI systems with the EU AI database?

Providers of high-risk AI systems listed in Annex III must register before placing systems on the EU market. Registration requires submitting the system’s identifying information, a summary of its intended purpose, the conformity assessment procedure completed, and contact information for the provider or authorized EU representative.

Importantly, the EU AI database is publicly accessible for most registrations. As a result, competitors, customers, and the general public can check whether your system is registered. Deployers that are public authorities, or that act on their behalf, also carry their own registration obligations, separate from those of providers.
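Before you submit a registration, it helps to check the draft for blank fields. This is a minimal sketch. The `RegistrationDraft` fields mirror the items listed above but are hypothetical and are not the EU database's actual schema. The representative check reflects the rule that providers established outside the EU must appoint an authorized representative in the EU.

```python
from dataclasses import dataclass

# Hypothetical required text fields for an internal pre-submission check.
REQUIRED_TEXT = ("system_name", "intended_purpose", "conformity_procedure", "provider_contact")


@dataclass
class RegistrationDraft:
    """Hypothetical internal checklist; field names are ours, not the EU database's schema."""
    system_name: str
    intended_purpose: str
    conformity_procedure: str
    provider_contact: str
    provider_in_eu: bool
    eu_representative_contact: str = ""

    def missing(self) -> list:
        """Names of required fields that are still blank."""
        gaps = [name for name in REQUIRED_TEXT if not getattr(self, name).strip()]
        # Providers established outside the EU act through an authorized representative.
        if not self.provider_in_eu and not self.eu_representative_contact.strip():
            gaps.append("eu_representative_contact")
        return gaps
```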

Conclusion: The Businesses That Act Now Will Lead — The Rest Will Scramble

Five Priorities You Must Act On Today

The EU AI Act is the most consequential technology regulation since GDPR. Its August 2026 deadline is a hard legal line, not a soft target. Consequently, businesses that move now will be far better positioned than those still waiting.

First, conduct your AI inventory and risk classification — you cannot address obligations you have not mapped. Second, immediately stop any AI practices that fall into the prohibited category, since every additional day of use compounds your legal exposure. Third, for every high-risk AI system, launch your compliance program against the seven requirements now.

Additionally, review your AI vendor relationships to ensure your deployer-provider responsibility split is contractually clear. Finally, assign formal ownership of AI compliance to a named leader in your organization. This cannot be treated as a background IT project.

Compliance as a Competitive Advantage

The EU AI Act is genuinely complex. However, it is also structured, specific, and navigable. The compliance path is clear for any business that engages with it seriously.

Furthermore, organizations that achieve compliance before August 2026 do not simply avoid penalties. They become the AI partners that regulated industry customers trust, that sophisticated enterprise buyers prefer, and that regulators cite as the standard others should meet. As a result, early compliance is not just a legal obligation — it is a strategic investment.

Your compliance journey begins with a single step: open a spreadsheet and start your AI inventory today.

Start Your EU AI Act Compliance Journey Today

Download our free 50-point EU AI Act Compliance Checklist — a practical audit tool covering all seven requirements for high-risk AI systems, built for compliance teams, CTOs, and legal departments.
