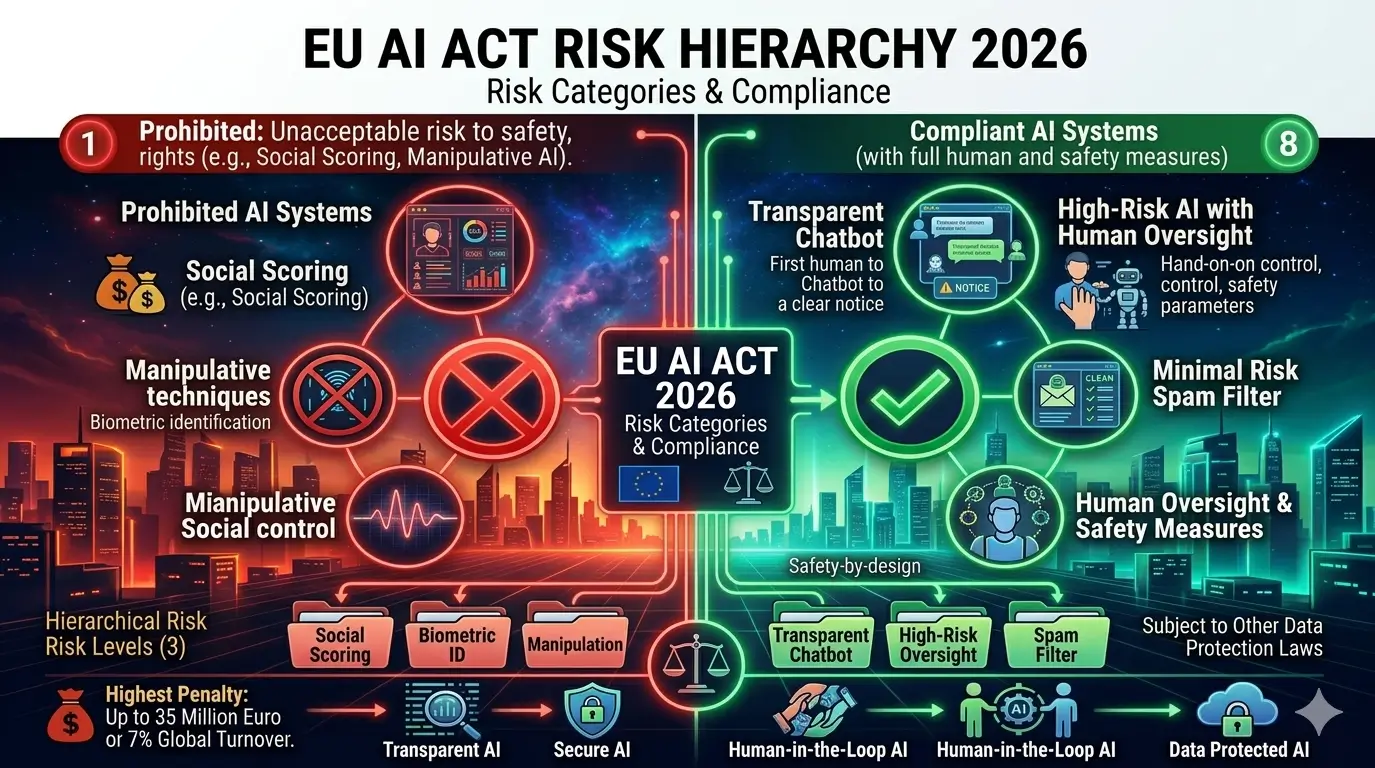

Unlike traditional regulatory approaches that apply uniform rules, the EU AI Act employs a tiered risk classification system. Your compliance obligations therefore depend mainly on your AI system’s risk level, and also on your role as provider (the organization that develops the system and places it on the market) or deployer (the organization that uses it). A recommendation algorithm faces different requirements than an AI system making decisions about loan approvals or criminal risk assessment.

💡 Key Insight: The EU AI Act applies extraterritorially. If your AI system is placed on the EU market, or its output is used in the EU, you must comply even if you’re based in the United States, Asia, or elsewhere. This reach makes the Act a likely de facto global standard for AI regulation.

Why This Matters for Your Organization

The EU represents approximately 15% of global GDP and 450 million people. Organizations ignoring EU AI compliance face fines of up to €35 million or 7% of global annual revenue, whichever is higher, for the most serious violations. Beyond fines, non-compliance creates reputational damage, market access restrictions, and operational disruptions.

The regulation shifts much of the compliance burden onto organizations themselves. Companies providing or deploying high-risk AI systems must assess and mitigate risks, implement safeguards, document their systems and decisions, and maintain human oversight mechanisms. This represents a significant operational change affecting product development, deployment, and ongoing monitoring processes.

The Four Risk Categories: A Complete Breakdown

The EU AI Act’s most innovative feature is its risk-based classification system. Rather than regulating all AI equally, the regulation creates four categories based on potential harm to fundamental rights, safety, and democratic processes. Understanding where your AI system falls within this framework is essential for compliance planning.

1. Unacceptable Risk (Prohibited Tier)

This highest risk category covers AI practices considered so harmful to safety or fundamental rights that they are banned outright. Apart from a few narrow exceptions written into the Act itself, organizations cannot place these systems on the EU market or use them in the EU, and no licensing or approval route exists.

Unacceptable risk systems include those that manipulate human behavior through subliminal techniques, those that exploit vulnerabilities in specific populations, and those that fundamentally contradict EU values regarding human dignity, freedom, and equality. The regulation recognizes certain applications as incompatible with democratic societies.

⚠️ Critical Warning: Prohibited-practice violations carry the Act’s harshest penalties: fines of up to €35 million or 7% of global annual revenue, whichever is higher. These are administrative fines; any criminal liability would arise under national law, not under the AI Act itself.

2. High-Risk AI Systems

High-risk systems represent the most heavily regulated category of permitted AI. These systems can legally operate in the EU, but organizations must implement comprehensive safeguards, conduct detailed compliance assessments, and maintain ongoing monitoring. High-risk classification applies to AI systems used in the sensitive areas listed in Annex III of the Act, or as safety components of products already covered by EU product safety legislation, because these systems can significantly affect fundamental rights or public safety.

High-risk applications include: AI used in hiring decisions, credit scoring, immigration processing, law enforcement, educational assessment, and safety components of regulated products such as medical devices. These systems affect consequential outcomes in people’s lives, justifying intensive regulatory oversight.

High-Risk Requirements Before Deployment:

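- Risk management system identifying and mitigating risks across the system’s lifecycle, plus a fundamental rights impact assessment for certain deployers (public bodies, providers of public services, and users of credit scoring or insurance pricing AI)

- Technical documentation covering system design, training data, testing protocols, and safety measures, plus automatic logging of system events

- Data governance ensuring training, validation, and testing data are relevant, sufficiently representative, and examined for possible biases

- Human oversight mechanisms allowing people to monitor, interpret, and override the system’s outputs

- Transparency through instructions for use describing the system’s capabilities, limitations, and intended purpose

- Conformity assessment before market placement: an internal self-assessment for most Annex III systems, with a notified body (third-party assessor) required for certain biometric systems and for products that already need third-party assessment under sectoral law

- EU database registration before market deployment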

| High-Risk AI Example | Key Compliance Requirement | Oversight Mechanism |
|---|---|---|
| Recruitment AI systems | Non-discrimination testing | Human review of decisions |
| Credit scoring/lending | Fundamental rights impact assessment | Appeal process for decisions |
| Law enforcement facial recognition | Accuracy benchmarking | Prior judicial or independent administrative authorization |
| Immigration processing | Fundamental rights impact assessment | Human final decision authority |
| Educational grading systems | Bias testing across demographics | Teacher review and override |

3. Limited Risk AI Systems

Limited risk systems interact directly with people or generate content, but don’t significantly threaten fundamental rights. They face few substantive requirements but must meet transparency obligations, which apply from August 2, 2026. Users interacting with limited-risk AI must know they’re engaging with an AI system rather than a human.

Examples include chatbots, interactive AI assistants, and generative AI systems that produce synthetic images, audio, video, or text, including deepfakes. The core requirement is disclosure: people must know when they’re interacting with AI or viewing AI-generated content, enabling informed decisions about its reliability and appropriateness.

Limited Risk Requirements:

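- Clear disclosure that users are interacting with an AI system, unless this is obvious from context

- Machine-readable marking of AI-generated or manipulated audio, images, video, and text

- Visible labeling of deepfakes as artificially generated or manipulated

- Notice to people exposed to emotion recognition or biometric categorization systems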
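The disclosure duty can be wired into a chat interface with very little code. The sketch below is a minimal illustration, assuming a hypothetical `ChatSession` wrapper, disclosure wording of our own, and a hypothetical `ai_generated` metadata key; the Act requires that people be informed and that synthetic content be marked, but it does not prescribe this text, key, or mechanism.

```python
from dataclasses import dataclass, field

# Hypothetical wording: the Act requires that users be informed,
# not this exact sentence.
AI_DISCLOSURE = "You are chatting with an AI system, not a human."


@dataclass
class ChatSession:
    """Minimal chat wrapper that discloses AI involvement on the first reply."""
    disclosed: bool = False
    transcript: list[str] = field(default_factory=list)

    def reply(self, model_output: str) -> str:
        # Prepend the disclosure once per session, before any AI-generated text.
        if not self.disclosed:
            message = f"{AI_DISCLOSURE}\n{model_output}"
            self.disclosed = True
        else:
            message = model_output
        self.transcript.append(message)
        return message


def label_synthetic_media(metadata: dict) -> dict:
    """Return a copy of media metadata with a machine-readable AI-generated flag.

    The key name is a hypothetical convention; a real deployment would follow
    whatever marking technique it adopts (watermarks, C2PA manifests, etc.).
    """
    labeled = dict(metadata)
    labeled["ai_generated"] = True
    return labeled


session = ChatSession()
print(session.reply("Hello! How can I help?"))
print(session.reply("Our store opens at 9am."))
print(label_synthetic_media({"title": "Product demo"}))  # {'title': 'Product demo', 'ai_generated': True}
```

Disclosing once per session, before the first AI-generated message, is a design choice; a product could instead show a persistent banner.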

4. Minimal/No Risk AI Systems

The vast majority of AI systems deployed today fall into this lowest-risk category. Minimal-risk AI includes spam filters, AI-enabled video games, and predictive analytics for internal business operations. These systems face virtually no regulatory requirements.

Organizations deploying minimal-risk AI can proceed without compliance assessments, documentation, or third-party review. However, the regulation encourages voluntary adoption of best practices including human oversight, fairness testing, and ethical guidelines. This soft-touch approach recognizes that most AI applications pose minimal societal risk.

✅ Best Practice: Even for minimal-risk systems, organizations should adopt voluntary governance practices. This demonstrates a commitment to responsible AI, builds consumer trust, and simplifies future compliance audits.
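The four-tier logic above can be expressed as an ordered triage: check for prohibited practices first, then high-risk uses, then transparency triggers, and default to minimal risk. The sketch below is a simplification, assuming hypothetical boolean flags on a `SystemProfile` that a compliance team fills in after legal review; it does not reproduce the Act’s exemptions and is no substitute for that review.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class SystemProfile:
    # Hypothetical flags, each answered by a human reviewer.
    matches_prohibited_practice: bool = False   # e.g. workplace emotion recognition
    annex_iii_use_case: bool = False            # e.g. hiring, credit scoring
    safety_component_of_regulated_product: bool = False
    interacts_with_people: bool = False         # e.g. chatbot
    generates_synthetic_content: bool = False   # e.g. image or voice generation


def classify(profile: SystemProfile) -> RiskTier:
    """Return the most severe tier that applies; the check order matters."""
    if profile.matches_prohibited_practice:
        return RiskTier.UNACCEPTABLE
    if profile.annex_iii_use_case or profile.safety_component_of_regulated_product:
        return RiskTier.HIGH
    if profile.interacts_with_people or profile.generates_synthetic_content:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


print(classify(SystemProfile(annex_iii_use_case=True)))     # RiskTier.HIGH
print(classify(SystemProfile(interacts_with_people=True)))  # RiskTier.LIMITED
print(classify(SystemProfile()))                            # RiskTier.MINIMAL
```

In practice the tiers are not mutually exclusive: a high-risk system that also chats with users carries the disclosure duty too, so a fuller tool would return a set of obligations rather than a single label.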

Eight Prohibited AI Practices: What’s Banned

The EU AI Act’s prohibited practices section (Article 5) is perhaps its most important and most immediately enforceable component. Since February 2, 2025, eight categories of AI practice have been illegal in the EU, subject only to narrow exceptions written into the Act itself. Organizations using these systems face fines of up to €35 million or 7% of global annual revenue, whichever is higher, enforceable since August 2, 2025.

Comprehensive List of Eight Prohibited Practices

1. Social Scoring Systems

AI systems that evaluate or classify people based on their social behavior or personal characteristics are prohibited when the resulting score leads to detrimental treatment in unrelated contexts or treatment disproportionate to the behavior. The ban applies to public authorities and private companies alike. Credit scoring based on relevant financial data is not banned; it is classified as high-risk instead.

2. Subliminal Manipulation Techniques

AI systems that deploy subliminal techniques operating below conscious awareness, or purposefully manipulative or deceptive techniques, are banned when they materially distort a person’s behavior in a way that causes, or is reasonably likely to cause, significant harm. The prohibition recognizes that manipulation through hidden techniques undermines human autonomy and informed consent.

3. Untargeted Facial Image Scraping

Creating or expanding facial recognition databases through untargeted scraping of facial images from the internet or CCTV footage is prohibited. Targeted collection falls outside this specific ban but remains subject to EU data protection law; mass, untargeted biometric collection is treated as incompatible with privacy and data protection principles.

4. Emotion Recognition in Workplace and Education

AI systems that infer the emotions of employees or students are banned, except where the system is intended for medical or safety reasons. These systems infringe on psychological privacy and could enable exploitative or discriminatory workplace practices, and the regulation notes serious doubts about the scientific validity of emotion recognition.

5. Biometric Categorization Based on Sensitive Attributes

Using biometric data (facial features, gait, voice) to categorize individuals by inferring their race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation is prohibited. Biometric authentication remains legal, as does labeling or filtering lawfully acquired biometric datasets, including in law enforcement.

6. Manipulative Targeting of Vulnerable Populations

AI systems that exploit vulnerabilities linked to age, disability, or a specific social or economic situation are banned when they materially distort behavior in a way that causes, or is reasonably likely to cause, significant harm. This covers, for example, a voice-enabled toy that encourages children to behave dangerously, as well as systems designed to manipulate elderly people or socially disadvantaged individuals.

7. Criminal Risk Prediction Based Solely on Profiling

AI systems that assess or predict the risk of a person committing a criminal offense based solely on profiling or on an assessment of personality traits are prohibited. Tools that support a human assessment already grounded in objective, verifiable facts linked to criminal activity fall outside the ban. AI used to evaluate the reliability of evidence in criminal investigations is not prohibited either; it is classified as high-risk, which requires human oversight.

8. Real-Time Remote Biometric Identification for Law Enforcement

Using real-time remote biometric identification (such as live facial recognition) in publicly accessible spaces for law enforcement is prohibited, except in narrowly defined cases: targeted searches for victims of abduction, trafficking, or sexual exploitation and for missing persons; preventing a specific and imminent threat to life or a genuine terrorist threat; and locating suspects of certain serious crimes. Each use generally requires prior authorization by a judicial or independent administrative authority.

| Prohibited Practice | Prohibition Applies From | Severity Level | Maximum Fine |
|---|---|---|---|
| Social scoring | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Subliminal or manipulative techniques | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Untargeted facial scraping | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Workplace and education emotion recognition | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Biometric categorization | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Exploiting vulnerable populations | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Profiling-based crime prediction | February 2, 2025 | Critical | €35 million or 7% of global revenue |
| Real-time remote biometric identification | February 2, 2025 | Critical | €35 million or 7% of global revenue |

💡 Compliance Insight: If you deployed any of these eight practices before February 2025, you must stop using them in the EU and withdraw them from the EU market. Continued operation after that date is an ongoing violation, and the duration of an infringement is one of the factors regulators weigh when setting the fine.

2026 Compliance Timeline: Critical Deadlines Approaching

The EU AI Act’s phased implementation creates a crucial deadline in August 2026. While prohibited practices took effect in February 2025 and general-purpose AI rules in August 2025, the most significant compliance obligation, the high-risk AI system requirements, takes effect August 2, 2026. Organizations must complete substantial preparations in the coming months.

Complete Timeline of Key Dates

Already Passed: Prohibited Practices Enforcement (February 2, 2025)

The eight prohibited AI practices took effect six months after the Act entered into force on August 1, 2024. Organizations that deployed these systems must cease operations and remove them from EU markets without delay, and fines for violations have been enforceable since August 2, 2025.

General-Purpose AI Rules (August 2, 2025)

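Obligations for providers of general-purpose AI models, including large language models, became applicable. Providers must maintain technical documentation, give downstream providers the information they need to integrate the model, adopt a policy for complying with EU copyright law, and publish a sufficiently detailed summary of the content used for training. Models designated as posing systemic risk carry additional duties, including model evaluation, adversarial testing, serious-incident reporting, and cybersecurity protection.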

🔴 CRITICAL: High-Risk AI System Deadline (August 2, 2026)

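This is the primary compliance deadline requiring substantial preparation. High-risk AI systems listed in Annex III must meet rigorous requirements by this date; systems already on the market before then are generally covered once they undergo significant design changes. Organizations cannot request individual extensions or exemptions, and deploying a non-compliant high-risk system exposes them to fines of up to €15 million or 3% of global annual revenue, whichever is higher.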

High-Risk Requirements Becoming Mandatory August 2, 2026:

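- Impact Assessment: A risk management system covering the whole lifecycle, plus a fundamental rights impact assessment for public bodies, providers of public services, and deployers of credit scoring or insurance pricing AI

- Data Governance: Training, validation, and testing data that are relevant, sufficiently representative, and examined for possible biases

- Technical Documentation: Detailed specifications of system architecture, decision logic, and performance benchmarks, plus automatic logging of system events

- Human Oversight Mechanisms: Design measures that let trained people monitor the system while it is in use and override or stop its outputs

- Performance Monitoring: Appropriate levels of accuracy, robustness, and cybersecurity, backed by post-market monitoring once the system is in use

- Transparency Measures: Instructions for use that explain the system’s capabilities, limitations, and intended purpose

- Conformity Assessment: Internal self-assessment for most Annex III systems; review by a notified body for certain biometric systems and for products that already require third-party assessment

- EU Database Registration: Listing of each high-risk system in the official EU database before it is placed on the market or put into use

- CE Marking: An EU declaration of conformity and the CE marking affixed to each high-risk system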
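A simple way to track readiness against the list above is a gap report per high-risk system. The sketch assumes hypothetical shorthand keys for each list item and that the team marks an item complete only once supporting evidence exists; it checks bookkeeping, not legal sufficiency.

```python
# Hypothetical shorthand keys for the high-risk obligations listed above.
HIGH_RISK_REQUIREMENTS = [
    "impact_assessment",
    "data_governance",
    "technical_documentation",
    "human_oversight",
    "performance_monitoring",
    "transparency",
    "conformity_assessment",
    "eu_database_registration",
    "ce_marking",
]


def compliance_gaps(completed: set[str]) -> list[str]:
    """Return outstanding requirements in the order they are listed."""
    unknown = completed - set(HIGH_RISK_REQUIREMENTS)
    if unknown:
        # Catch typos so a misspelled key is not silently counted as done.
        raise ValueError(f"Unrecognized requirement keys: {sorted(unknown)}")
    return [req for req in HIGH_RISK_REQUIREMENTS if req not in completed]


done = {"data_governance", "technical_documentation", "transparency"}
for gap in compliance_gaps(done):
    print("MISSING:", gap)
```

Keeping the requirement list in one place means a single edit updates every system’s report if guidance adds or renames an obligation.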

⚠️ Timeline Warning: The August 2026 deadline is close. Organizations with high-risk AI systems that have not started compliance assessments should begin immediately; every month of delay increases the risk of missing the deadline and facing non-compliance penalties.


Product-Integrated AI (August 2, 2027)

High-risk AI integrated into regulated products (medical devices, machinery, aviation equipment) must comply by August 2027. This later deadline recognizes that product-integrated AI requires regulatory coordination with existing product safety frameworks.
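The phased dates above lend themselves to a lookup that answers "which obligations already apply on a given day?". The sketch hard-codes only the four dates named in this section; the phase labels are our own shorthand, and the helper ignores transitional rules (for example, general-purpose AI models already on the market before August 2025 have until August 2027).

```python
from datetime import date

# Application dates named in this section, with shorthand labels.
APPLICATION_DATES = [
    (date(2025, 2, 2), "prohibited practices"),
    (date(2025, 8, 2), "general-purpose AI model obligations"),
    (date(2026, 8, 2), "high-risk (Annex III) requirements"),
    (date(2027, 8, 2), "high-risk AI in regulated products"),
]


def phases_in_force(on: date) -> list[str]:
    """Return every phase whose application date is on or before `on`."""
    return [label for start, label in APPLICATION_DATES if start <= on]


print(phases_in_force(date(2026, 3, 1)))
# ['prohibited practices', 'general-purpose AI model obligations']
```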

Creating Your Compliance Timeline

Organizations should work backward from August 2, 2026. Allocate time for: conducting risk assessments (4-8 weeks), documentation preparation (6-10 weeks), impact assessment development (8-12 weeks), notified body selection and engagement (2-4 weeks), and conformity assessment completion (4-8 weeks). If these phases run one after another, this totals 24-42 weeks of preparation time.
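The arithmetic behind the 24-42 week total, and the start dates it implies, can be checked with a short script. It assumes the phases run strictly one after another with no overlap or buffer, the same assumption the totals above make; overlapping phases would shorten the plan.

```python
from datetime import date, timedelta

DEADLINE = date(2026, 8, 2)

# (phase, minimum weeks, maximum weeks), taken from the estimates above.
PREP_PHASES = [
    ("risk assessments", 4, 8),
    ("documentation preparation", 6, 10),
    ("impact assessment development", 8, 12),
    ("notified body selection and engagement", 2, 4),
    ("conformity assessment completion", 4, 8),
]


def total_weeks(phases):
    """Sum minimum and maximum durations, assuming phases run back to back."""
    return sum(p[1] for p in phases), sum(p[2] for p in phases)


def latest_start(deadline, weeks):
    """Latest start date that still finishes a plan of `weeks` weeks by the deadline."""
    return deadline - timedelta(weeks=weeks)


low, high = total_weeks(PREP_PHASES)
print(f"{low}-{high} weeks")                                     # 24-42 weeks
print("fastest plan starts by:", latest_start(DEADLINE, low))   # 2026-02-15
print("slowest plan starts by:", latest_start(DEADLINE, high))  # 2025-10-12
```

Comparing the printed start dates with today’s date shows whether a purely sequential plan still fits; if it does not, phases have to overlap.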

Penalties for Non-Compliance: Understanding Financial and Legal Consequences

The EU AI Act enforces compliance through an escalating penalty structure that increases with violation severity. Understanding potential consequences helps organizations prioritize compliance efforts and assess compliance costs against penalty risks.

Penalty Structure by Violation Type

Tier 1: Prohibited AI Practice Violations

Deploying any of the eight prohibited AI practices triggers fines of up to €35 million or 7% of global annual revenue, whichever is higher. This is the Act’s highest penalty tier, reflecting the view that these practices are fundamentally incompatible with EU values.

Example: A European financial services company deploys an AI system that monitors its call center employees’ emotions starting March 2025. The company faces fines of up to €35 million or 7% of global annual revenue, whichever is higher. When setting the amount, regulators weigh factors such as the nature, gravity, and duration of the infringement and whether it was intentional, so a multinational that knowingly ran such a system across several member states for 18 months would likely face a larger fine than a company that corrected a brief breach.

Tier 2: High-Risk System Non-Compliance

Organizations failing to meet the obligations for high-risk AI systems, or the transparency obligations for limited-risk systems, face fines of up to €15 million or 3% of global annual revenue, whichever is higher. This applies to systems deployed without the required risk management, human oversight, or conformity assessment.

Example: A recruitment firm deploys an AI hiring system August 2026 without bias testing or human oversight. The firm faces penalties up to €15 million or 3% of revenue, whichever is greater.

Tier 3: Incorrect or Misleading Information

Supplying incorrect, incomplete, or misleading information to notified bodies or national authorities in response to a request triggers fines of up to €7.5 million or 1% of global annual revenue, whichever is higher. For SMEs and start-ups, each cap in every tier is the lower of the two figures rather than the higher.

Additional Consequences Beyond Financial Penalties

- Market Access Restrictions: Market surveillance authorities can restrict or prohibit a non-compliant AI system from being made available in the EU until compliance is achieved

- Product Recalls: Organizations may be required to withdraw or recall non-compliant AI systems from the market

- Operational Disruption: Correcting violations requires system redesign, retraining, and redeployment, which can cost more than the fine itself

- Reputational Damage: Public enforcement actions damage customer trust and investor confidence

- Criminal Liability: The AI Act itself sets administrative fines only, but individual executives may face criminal charges under national law where a violation also involves offenses such as fraud or intentional deception

- Regulatory Investigations: Authorities can require access to documentation and to training, validation, and testing data, and in some cases to source code, to verify compliance

| Violation Category | Fixed Cap | Revenue Cap | Likelihood of Enforcement |
|---|---|---|---|
| Prohibited AI practice | €35 million | 7% of global revenue | Very High |
| High-risk system violation | €15 million | 3% of global revenue | High |
| Transparency disclosure failure | €15 million | 3% of global revenue | Medium |
| Non-cooperation with regulators | €15 million | 3% of global revenue | High |
| Incorrect or misleading information to authorities | €7.5 million | 1% of global revenue | Not rated |

Both caps are maximums: the higher of the two figures applies, or the lower one for SMEs and start-ups.

💡 Financial Perspective: For a company with €1 billion in global annual revenue, 7% equals €70 million, double the €35 million fixed cap, and the higher figure applies. Because exposure grows with revenue, compliance investment now is likely to cost far less than the potential fines.
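Because every fine cap in the Act pairs a fixed amount with a share of worldwide annual turnover, exposure reduces to a one-line comparison. The sketch uses the caps in Article 99 of the final text (€35 million/7%, €15 million/3%, €7.5 million/1%) and the rule that the higher figure applies, or the lower one for SMEs and start-ups. The category names are our own shorthand, and the function computes only the legal ceiling, not the fine a regulator would actually impose; fines for general-purpose AI model providers follow a separate article and are not modeled.

```python
# Article 99 upper limits: (fixed cap in euros, percent of worldwide annual turnover).
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),       # includes high-risk and transparency duties
    "incorrect_information": (7_500_000, 1),
}


def max_fine(violation: str, turnover_eur: int, is_sme: bool = False) -> int:
    """Upper limit of the administrative fine, in whole euros.

    Large undertakings: the higher of the fixed cap and the turnover share.
    SMEs and start-ups: the lower of the two.
    """
    fixed_cap, percent = FINE_CAPS[violation]
    turnover_cap = turnover_eur * percent // 100
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


print(max_fine("prohibited_practice", 1_000_000_000))         # 70000000
print(max_fine("other_obligation", 100_000_000))              # 15000000
print(max_fine("other_obligation", 10_000_000, is_sme=True))  # 300000
```

Integer arithmetic keeps the results exact in whole euros, which avoids floating-point rounding on large turnover figures.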

Case Studies: Real-World Impact and Compliance Examples

Case Study 1: European Recruitment Software Company (High-Risk AI Compliance)

Background

A Berlin-based HR technology company developed AI recruitment screening software analyzing thousands of applications daily. The system ranked candidates based on predicted job performance using historical hiring data as training material.

Compliance Challenge

The recruitment AI fell into the high-risk category under EU AI Act Annex III (employment and hiring decisions). The software required full compliance by August 2026, including bias testing, impact assessment, and human oversight mechanisms.

Implementation Approach

- Conducted fundamental rights impact assessment identifying potential gender and age discrimination risks

- Tested system performance across demographic groups, discovering 15% accuracy variance between gender categories

- Retrained models using balanced datasets and fairness constraints

- Implemented human review processes requiring HR specialists to examine all AI scores above 80th percentile

- Created transparency mechanisms disclosing AI decision factors to candidates

- Engaged with notified body (third-party assessor) for conformity assessment

- Registered system in EU AI system database
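The bias-testing step in this case study can be illustrated with a per-group accuracy comparison. The case study does not say how its 15% figure was computed; the sketch assumes it is the gap in accuracy between the best- and worst-served groups, which is only one of several fairness metrics (others compare selection rates or error rates). Group names and data are hypothetical.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per demographic group.

    `records` is an iterable of (group, predicted_label, true_label) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(per_group):
    """Largest accuracy difference between any two groups, as a fraction."""
    values = per_group.values()
    return max(values) - min(values)


# Hypothetical evaluation data: (group, model prediction, ground truth).
sample = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
]
scores = accuracy_by_group(sample)
print(scores)                    # {'group_a': 1.0, 'group_b': 0.5}
print(max_accuracy_gap(scores))  # 0.5
```

Re-running the same comparison after retraining shows whether the fairness constraints actually narrowed the gap.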

Outcomes

The company successfully achieved compliance by August 2026, gaining competitive advantage as an early complier. Market analysis showed a 23% increase in customer trust and 15% revenue growth from European markets in the following year. Compliance costs totaled €400,000, while the company avoided exposure to fines of up to €105 million (3% of its €3.5 billion global revenue) and to market access restrictions.

Case Study 2: US Technology Company (Prohibited Practice Violation Prevention)

Background

A Silicon Valley AI company developed emotion recognition technology for workplace wellness monitoring. The system analyzed video feeds from employee computers to detect stress, engagement, and emotional state during work.

Compliance Challenge

In November 2024, the company planned European market expansion. Regulatory analysis discovered that emotion recognition in workplace contexts is explicitly prohibited under the EU AI Act effective February 2025.

Implementation Approach

- Immediately ceased European sales and deployment of emotion recognition product

- Refocused European product strategy on permitted wellness features (activity tracking, break reminders)

- Removed emotion recognition capabilities from EU-deployed systems

- Invested in alternative technology not involving emotional state detection

- Implemented geographic compliance controls preventing EU users from accessing prohibited features
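The case study does not describe how its geographic controls were built. One minimal approach, assuming each customer account records the country where the product is deployed, is a feature gate keyed on that country. The feature names and the choice of key are illustrative assumptions, and the country list covers only the 27 EU member states.

```python
# EU-27 member states as ISO 3166-1 alpha-2 codes.
EU_MEMBER_STATES = frozenset({
    "AT", "BE", "BG", "HR", "CY", "CZ", "DK", "EE", "FI", "FR", "DE", "GR", "HU", "IE",
    "IT", "LV", "LT", "LU", "MT", "NL", "PL", "PT", "RO", "SK", "SI", "ES", "SE",
})

# Hypothetical feature names; only emotion recognition is blocked in the EU.
EU_BLOCKED_FEATURES = frozenset({"emotion_recognition"})


def feature_enabled(feature: str, deployment_country: str) -> bool:
    """Return False for EU-blocked features when the deployment is in the EU.

    Keyed on where the customer deploys the product (from account data),
    not on a user's IP address, which is easy to spoof.
    """
    in_eu = deployment_country.upper() in EU_MEMBER_STATES
    return not (in_eu and feature in EU_BLOCKED_FEATURES)


print(feature_enabled("emotion_recognition", "de"))  # False
print(feature_enabled("break_reminders", "DE"))      # True
print(feature_enabled("emotion_recognition", "US"))  # True
```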

Outcomes

By pivoting quickly, the company avoided deploying a prohibited system in the EU, along with fines of up to €35 million or 7% of global annual revenue, whichever is higher. The company maintained European market presence while developing compliant products. This case demonstrates the importance of regulatory scanning and proactive compliance planning before violations occur.

❓ Frequently Asked Questions About EU AI Act

Q: What is the EU AI Act and why does it matter for my organization?

A: The EU AI Act is Europe’s comprehensive artificial intelligence regulation establishing a risk-based framework for AI systems. It matters because it affects any organization deploying AI in or affecting the EU market, regardless of company location or size. The regulation imposes compliance obligations, documentation requirements, and potential penalties up to €35 million or 7% of global revenue for violations. Since the EU represents approximately 450 million people and 15% of global GDP, ignoring these requirements significantly restricts market access and creates legal exposure.

Q: When do organizations need to comply with the EU AI Act?

A: Compliance deadlines are staggered. Prohibited AI practices became enforceable February 2, 2025. General-purpose AI requirements took effect August 2, 2025. The critical deadline is August 2, 2026, when all high-risk AI system requirements become mandatory. Organizations with high-risk systems should begin compliance assessments immediately to meet this deadline. Delayed starts significantly increase risks of missing requirements and facing violations.

Q: How are AI systems classified under the EU AI Act?

A: The EU AI Act classifies AI into four risk categories: (1) Unacceptable Risk: practices banned outright, including social scoring and subliminal manipulation; (2) High-Risk: heavily regulated systems affecting fundamental rights or public safety, requiring risk management, human oversight, and conformity assessment; (3) Limited Risk: systems requiring transparency disclosure that users are interacting with AI or viewing AI-generated content; (4) Minimal/No Risk: systems facing virtually no requirements, including most recommendation algorithms and spam filters. Classification depends on the system’s intended purpose and context of use, and the resulting impact on rights, safety, and democratic processes, rather than on the underlying technology.

Q: What eight AI practices are prohibited under the EU AI Act?

A: Eight practices are banned: (1) Social scoring that leads to unjustified detrimental treatment; (2) Subliminal, manipulative, or deceptive techniques that cause significant harm; (3) Untargeted scraping of facial images to build facial recognition databases; (4) Emotion recognition in workplaces and educational institutions, except for medical or safety reasons; (5) Biometric categorization inferring race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation; (6) Exploiting vulnerabilities due to age, disability, or social or economic situation; (7) Predicting criminal behavior based solely on profiling or personality traits; (8) Real-time remote biometric identification in publicly accessible spaces for law enforcement, subject to narrow exceptions. These prohibitions have applied since February 2, 2025, with fines enforceable from August 2, 2025.

Q: What are the specific penalties for non-compliance?

A: Penalties scale by violation severity. Prohibited AI practice violations trigger fines of up to €35 million or 7% of global revenue, whichever is greater. Breaches of high-risk or transparency obligations incur up to €15 million or 3% of global revenue. Supplying incorrect or misleading information to authorities incurs up to €7.5 million or 1%. For a €10 billion company, the 7% cap equals €700 million; for SMEs and start-ups, the lower of the two figures applies. Beyond financial penalties, organizations face market restrictions, product withdrawals or recalls, regulatory investigations, and significant reputational damage.

Q: Do smaller companies need to comply with the EU AI Act?

A: Yes. The EU AI Act applies extraterritorially to any organization placing AI systems on the EU market, or whose AI output is used in the EU, regardless of company size or location. However, compliance obligations scale with system risk classification. A small company deploying minimal-risk AI faces minimal requirements. A small company deploying high-risk systems must implement full compliance regardless of size. The Act does offer some relief for SMEs and start-ups, including fine caps set at the lower (rather than the higher) of the fixed amount and the revenue percentage, and priority access to regulatory sandboxes, but these measures don’t eliminate core obligations for high-risk systems.

✅ Your Action Plan for EU AI Act Compliance

Immediate Actions (This Month)

Organizations should begin compliance planning immediately. The August 2026 deadline leaves little time, requiring rapid action to avoid violations.

- AI System Inventory: Document all AI systems deployed or planned, including model names, functions, data sources, and deployment locations

- Risk Classification: Categorize each system into risk levels using EU AI Act definitions and Annex III high-risk categories

- Compliance Assessment: For high-risk systems, identify specific requirements not currently met

- Regulatory Scanning: Subscribe to EU AI Act guidance updates and member state implementation guidelines

- Budget Allocation: Estimate compliance costs including documentation, assessment, and third-party review
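An inventory is easiest to classify and query when each system is a structured record. The sketch below assumes hypothetical field names mirroring the inventory items above and flags high-risk systems deployed in EU countries. It does not capture systems deployed elsewhere whose output is used in the EU, which can also fall under the Act.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    """One row of a hypothetical AI system inventory."""
    name: str
    model: str
    function: str
    data_sources: list[str] = field(default_factory=list)
    deployment_locations: list[str] = field(default_factory=list)
    risk_tier: str = "unclassified"  # filled in during risk classification


def needs_high_risk_review(record: AISystemRecord, eu_locations: set[str]) -> bool:
    """True when a high-risk system is deployed in at least one EU location."""
    return record.risk_tier == "high" and any(
        loc in eu_locations for loc in record.deployment_locations
    )


inventory = [
    AISystemRecord("cv-screener", "ranker-v2", "rank job applicants",
                   ["historical hiring data"], ["DE", "US"], "high"),
    AISystemRecord("spam-filter", "nb-v1", "filter inbound email",
                   ["email logs"], ["DE"], "minimal"),
]
eu = {"DE", "FR", "NL"}  # abbreviated member-state list for the example
print([r.name for r in inventory if needs_high_risk_review(r, eu)])  # ['cv-screener']
```

Defaulting `risk_tier` to "unclassified" makes it easy to list systems that still need the risk classification step.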

Short-Term Actions (Next 3 Months)

- Designate Compliance Owner: Assign accountability for EU AI Act compliance to specific executive or team

- Establish Compliance Team: Assemble cross-functional team including legal, product, data science, and operations

- Prohibited Practice Remediation: If any prohibited practices are still in use, remove them from the EU market immediately; they have been banned since February 2, 2025

- Notified Body Identification: Research and contact qualified third parties capable of conducting conformity assessments

- Policy Development: Begin drafting data governance, human oversight, and transparency policies

Medium-Term Actions (4-8 Months)

- Impact Assessment Completion: Conduct fundamental rights impact assessments for high-risk systems

- Technical Documentation: Prepare comprehensive system documentation including architecture, decision logic, and performance metrics

- Bias Testing: Conduct fairness and accuracy testing across demographic groups

- Human Oversight Implementation: Establish processes ensuring human review of high-risk AI decisions

- Transparency Mechanisms: Develop user-facing documentation about system capabilities and limitations
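One way to build human oversight into a decision workflow is to make the model’s output advisory and require a named reviewer to record every final outcome, with adverse or low-confidence recommendations routed for fuller review. The sketch assumes a binary approve/reject decision, a hypothetical confidence floor of 0.9, and queue names of our own; the Act requires effective oversight but does not prescribe thresholds or queues.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    """A hypothetical high-risk decision record; the AI output is advisory."""
    subject_id: str
    ai_recommendation: str  # "approve" or "reject" (assumed binary)
    ai_confidence: float    # model score in [0, 1]
    final_outcome: Optional[str] = None
    reviewer: Optional[str] = None


def route(decision: Decision, confidence_floor: float = 0.9) -> str:
    """Pick the human queue for a decision; nothing is finalized automatically.

    Adverse or low-confidence recommendations get a full review; the rest get
    a lighter confirmation step in which the reviewer can still override.
    """
    if decision.ai_recommendation == "reject" or decision.ai_confidence < confidence_floor:
        return "full_review"
    return "confirm"


def finalize(decision: Decision, reviewer: str, outcome: str) -> Decision:
    """Record the named reviewer and their final call, which may differ from the AI's."""
    decision.reviewer = reviewer
    decision.final_outcome = outcome
    return decision


d = Decision("applicant-17", "reject", 0.97)
print(route(d))                                                 # full_review
print(finalize(d, "hr_specialist_3", "approve").final_outcome)  # approve
```

Routing every rejection to full review, rather than only high scores, keeps adverse outcomes from being decided without a person looking at them.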

Final Preparation (9-17 Months)

- Conformity Assessment: Complete the required conformity assessment, engaging a notified body where one is required

- EU Database Registration: Prepare documentation for official EU AI system registry listing

- CE Marking: Draw up the EU declaration of conformity and affix the CE marking to high-risk systems

- Final Testing: Conduct comprehensive compliance verification across all requirements

- Staff Training: Ensure teams understand compliance requirements and ongoing monitoring obligations

✅ Success Indicator: By August 2026, organizations should have completed impact assessments, finished conformity assessments (engaging notified bodies where required), registered high-risk systems, implemented human oversight, and affixed CE markings. This demonstrates full compliance readiness.

Conclusion: The AI Regulation Era Begins

The EU AI Act represents a historic shift in how societies regulate powerful technologies. By establishing a risk-based framework distinguishing between minimal-risk and unacceptable-risk AI, Europe has created a pragmatic but rigorous regulatory model likely to influence global AI governance standards.

Organizations deploying AI systems in or affecting EU markets must understand their compliance obligations. The August 2026 deadline for high-risk systems approaches rapidly. Delaying compliance preparation significantly increases risks of violations, penalties, and market access restrictions. Early compliance investment protects market access, builds customer trust, and demonstrates commitment to responsible AI development.

The future of artificial intelligence will not be determined by developers alone but by the societies hosting these powerful systems. The EU AI Act reflects societal commitment to ensuring AI advances serve human flourishing while protecting fundamental rights. Organizations embracing this vision gain competitive advantage as responsible AI leaders.

About This Article

Accuracy Note: This article reflects EU AI Act provisions as of March 2026. Regulations evolve as member states implement guidance and enforcement begins. Organizations should verify all compliance requirements with official EU sources and consult legal counsel before making compliance decisions.

Update Schedule: This guide will be updated quarterly as enforcement guidance and member state regulations develop.